SynxDB 2 Documentation

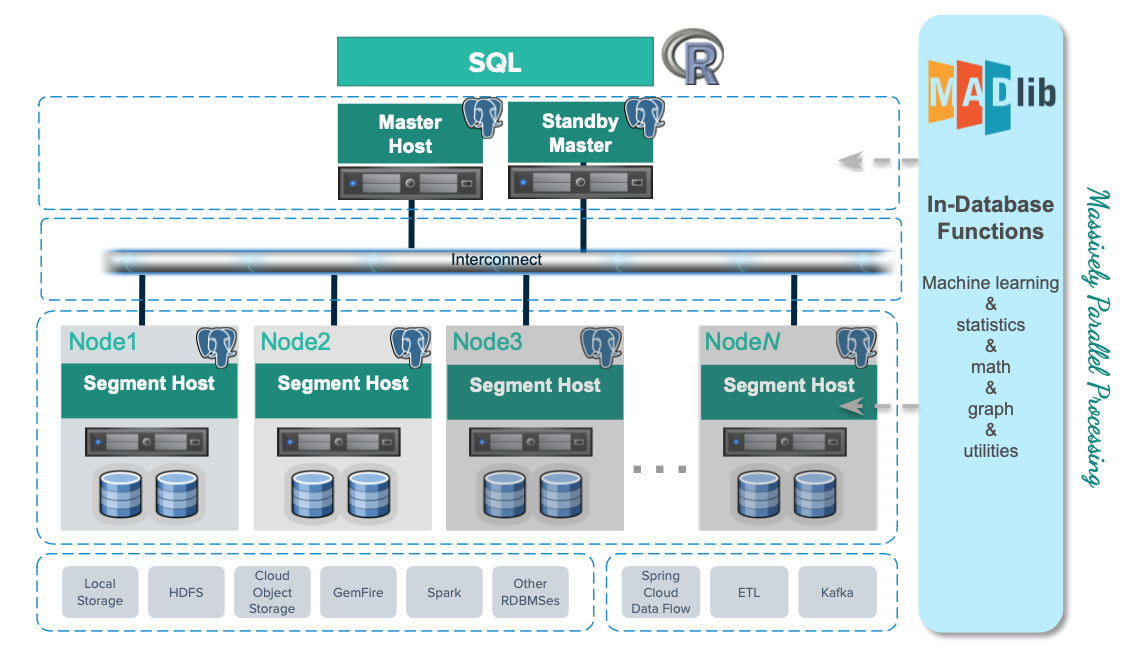

This site provides complete documentation for SynxDB 2, a high-performance, open-source MPP (Massively Parallel Processing) database designed for large-scale analytics. SynxDB is an enterprise-grade database with a scalable architecture, and can act as a drop-in replacement for Greenplum 6 to seamless migration without change your existing workloads.

Note Greenplum® is a registered trademark of Broadcom Inc. Synx Data Labs and SynxDB are not affiliated with, endorsed by, or sponsored by Broadcom, Inc. Any references to Greenplum are for comparative, educational, and interoperability purposes only.

Quick-Start Installation

This guide provides simple instructions for installing SynxDB 2 on a host machine.

Note For detailed instructions on preparing host machines and deploying SynxDB in a production environment, see Installing and Upgrading SynxDB.

Security Considerations

The installation procedure follows a structured RPM-based approach, similar to EPEL, in order to secure dependency management and updates. Synx Data Labs maintains high security standards through:

- Cryptographically signed RPM packages

- Signed repository metadata

- GPG key verification

- Package signature validation at multiple stages

All artifacts used in the installation process are cryptographically signed to ensure package integrity and authenticity.

Prerequisites

To install SynxDB you require:

- A supported EL9-compatible operating system (RHEL 9, Rocky Linux 9, Oracle Linux 9, AlmaLinux 9) or EL8-compatible operating system (RHEL 8, Rocky Linux 8, Oracle Linux 8, AlmaLinux 8), or EL8-compatible operating system (RHEL 7, CentOS 7).

rootaccess to each host system. This procedure assumes that you are logged in as therootuser. As an alternative, prependsudoto each command if you choose to install as a non-root user.- The

wgetutility. If necessary installwgeton each host with the command:dnf install wget - Internet access to Synx Data Labs repositories. This guide assumes that each host can access the Synx Data Labs repositories. If your environment restricts internet access, or if you prefer to host repositories within your infrastructure to ensure consistent package availability, contact Synx Data Labs to obtain a complete repository mirror for local hosting.

Procedure

Follow these steps to securely install SynxDB to your system:

-

Login to your Enterprise Linux 8 or 9 system as the

rootuser. -

Import the Synx Data Labs GPG key so you can use it to validate downloaded packages:

wget -nv https://synxdb-repo.s3.us-west-2.amazonaws.com/gpg/RPM-GPG-KEY-SYNXDB rpm --import RPM-GPG-KEY-SYNXDB -

Verify that you have imported the keys:

rpm -q gpg-pubkey --qf "%{NAME}-%{VERSION}-%{RELEASE} %{SUMMARY}\n" | grep SynxDBYou should see output similar to:

gpg-pubkey-df4bfefe-67975261 gpg(SynxDB Infrastructure <infrastructure@synxdata.com>) -

Download the SynxDB repository package:

wget -nv https://synxdb-repo.s3.us-west-2.amazonaws.com/repo-release/synxdb2-release-1-1.rpm -

Verify the package signature of the repository package you just downloaded.

rpm --checksig synxdb2-release-1-1.rpmEnsure that the command output shows that the signature is OK. For example:

synxdb2-release-1-1.rpm: digests signatures OK -

After verifying the package signature, install the SynxDB repository package. For Enterprise Linux 9:

dnf install -y synxdb2-release-1-1.rpmThe repository installation shows details of the installation process similar to:

Last metadata expiration check: 2:11:29 ago on Mon Mar 10 18:53:32 2025. Dependencies resolved. ========================================================================= Package Architecture Version Repository Size ========================================================================= Installing: synxdb-release noarch 1-1 @commandline 8.1 k Transaction Summary ========================================================================= Install 1 Package Total size: 8.1 k Installed size: 0 Downloading Packages: Running transaction check Transaction check succeeded. Running transaction test Transaction test succeeded. Running transaction Preparing : 1/1 Running scriptlet: synxdb2-release-1-1.noarch 1/1 Installing : synxdb2-release-1-1.noarch 1/1 Verifying : synxdb2-release-1-1.noarch 1/1 Installed: synxdb2-release-1-1.noarch Complete!Note: The

-yoption in thednf installcommand automatically confirms and proceeds with installing the software as well as dependent packages. If you prefer to confirm each dependency manually, omit the-yflag. -

After you have installed the repository package, install SynxDB with the command:

dnf install -y synxdbThe installation process installs all dependencies required for SynxDB 2 in addition to the SynxDB software.

-

Verify the installation with:

rpm -qi synxdbYou should see installation details similar to:

Name : synxdb Version : 2.27.2 Release : 1.el8 Architecture: x86_64 Install Date: Fri Mar 14 17:22:59 2025 Group : Applications/Databases Size : 1541443881 License : ASL 2.0 Signature : RSA/SHA256, Thu Mar 13 10:36:01 2025, Key ID b783878edf4bfefe Source RPM : synxdb-2.27.2-1.el8.src.rpm Build Date : Thu Mar 13 09:55:50 2025 Build Host : cdw Relocations : /usr/local/synxdb Vendor : Synx Data Labs, Inc. URL : https://synxdatalabs.com Summary : High-performance MPP database for enterprise analytics Description : SynxDB is a high-performance, enterprise-grade, massively parallel processing (MPP) database designed for advanced analytics on large-scale data sets. Derived from PostgreSQL and the last open-source version of Greenplum, SynxDB offers seamless compatibility, powerful analytical capabilities, and robust security features. Key Features: - Massively parallel processing for optimized query performance - Advanced analytics for complex data workloads - Seamless integration with ETL pipelines and BI tools - Broad compatibility with diverse data sources and formats - Enhanced security and operational reliability Disclaimer & Attribution: SynxDB is derived from the last open-source version of Greenplum, originally developed by Pivotal Software, Inc., and maintained under Broadcom Inc.'s stewardship. Greenplum® is a registered trademark of Broadcom Inc. Synx Data Labs, Inc. and SynxDB are not affiliated with, endorsed by, or sponsored by Broadcom Inc. References to Greenplum are provided for comparative, interoperability, and attribution purposes in compliance with open-source licensing requirements. For more information, visit the official SynxDB website at https://synxdatalabs.com.Also verify that the

/usr/local/synxdbdirectory points to the specific version of SynxDB that you downloaded:ls -ld /usr/local/synxdb*For version 2.27.2 the output is:

lrwxrwxrwx 1 root root 24 Feb 19 10:05 /usr/local/synxdb -> /usr/local/synxdb-2.27.2 drwxr-xr-x 10 root root 4096 Mar 10 21:07 /usr/local/synxdb-2.27.2 -

If you have not yet created the

gpadminadministrator user and group, execute these steps:# groupadd gpadmin # useradd gpadmin -r -m -g gpadmin # passwd gpadmin New password: <changeme> Retype new password: <changeme> -

Login as the

gpadminuser and set the SynxDB environment:su - gpadmin source /usr/local/synxdb/synxdb_path.sh -

Finally, verify that the following SynxDB executable paths and versions match the expected paths and versions for your installation:

# which postgres /usr/local/synxdb-2.27.2/bin/postgres # which psql /usr/local/synxdb-2.27.2/bin/psql # postgres --version postgres (SynxDB) 9.4.26 # postgres --gp-version postgres (SynxDB) 6.27.2+SynxDB_GA build 1 # psql --version psql (PostgreSQL) 9.4.26

Note: If you are running a multi-node SynxDB cluster, execute the above commands on each host machine in your cluster.

At this point, you have installed and configured SynxDB on your Enterprise Linux system(s). The database is now ready for initialization and configuration using the SynxDB documentation.

Contact Synx Data Labs support at info@synxdata.com for any troubleshooting any installation issues.

Automating Multi-Node Deployments

You can use various automation tools to streamline the process of installing SynxDB to multiple hosts. Follow these recommended approaches:

Using Ansible

Ansible allows you to automate installation across all nodes in the cluster using playbooks.

- Create an Ansible inventory file listing all nodes.

- Develop a playbook to:

- Install the SynxDB repository package.

- Install SynxDB using

dnf. - Verify installation across all nodes.

- Run the playbook to automate deployment.

Using a Bash Script with SSH

For environments without Ansible, a simple Bash script can help distribute installation tasks. An installation script should:

- Define a list of nodes in a text file (e.g.,

hosts.txt). - Use a loop in the script to SSH into each node and run installation commands.

The following shows the structure of an example bash script for installation:

for host in $(cat hosts.txt); do

ssh gpadmin@$host "sudo dnf install -y synxdb"

done

SynxDB 2.x Release Notes

This document provides important release information for all Synx Data Labs SynxDB 2.x releases.

SynxDB 2.x software is available from the Synx Data Labs repository, as described in Quick-Start Installation.

Release 2.27

Release 2.27.2

Release Date: 2025-03-16

SynxDB 2.27.2 is the first generally-available release of SynxDB 2. SynxDB 2 is an enterprise-grade database with a scalable architecture, and can act as a drop-in replacement for Greenplum 6 to seamless migration without change your existing workloads. See the Drop-In Replacement Guide for Greenplum 6 for details.

SynxDB 2.27.2 is based on the last open source Greenplum 6.27.2 software release.

Known Issues and Limitations

SynxDB 2 has these limitations:

- PXF is not currently installed with the SynxDB 2 rpm.

- Additional extensions such as MADlib and PostGIS are not yet install with the SynxDB 2 rpm.

Disclaimer & Attribution

SynxDB is derived from the last open-source version of Greenplum, originally developed by Pivotal Software, Inc., and maintained under Broadcom Inc.’s stewardship. Greenplum® is a registered trademark of Broadcom Inc. Synx Data Labs, Inc. and SynxDB are not affiliated with, endorsed by, or sponsored by Broadcom Inc. References to Greenplum are provided for comparative, interoperability, and attribution purposes in compliance with open-source licensing requirements.

For more information, visit the official SynxDB website at https://synxdata.com.

Drop-In Replacement Guide for Greenplum 6

SynxDB 2 provides feature parity with the last open source release of Greenplum 6. If you used open source Greenplum 6 or proprietary Greenplum 6 without gpcc or QuickLZ compression, you can install SynxDB 2 alongside your existing Greenplum installation and dynamically switch between each environment to validate performance and functionality. If you wish to migrate to SynxDB 2 but currently use the proprietary features of Greenplum 6, follow the pre-migration guide to prepare your existing Greenplum deployment for migration to SynxDB 2.

Pre-Migration Procedure

This guide helps you identify and address Greenplum 6 proprietary features before you migrate to SynxDB 2, or before you install SynxDB 2 as a drop-in replacement to Greenplum 6.

Prerequisites

Before you make any configuration changes to your Greenplum 6 system:

- Perform a full backup of your data.

- Backup the

postgresql.conffiles from each segment data directory. - Document any existing configuration changes that you have made to Greenplum 6 or to Greenplum 6 host machines.

- Test all potential changes in a development environment before you apply them to a production system.

While this guide focuses on identifying and addressing proprietary features, note that subsequent migration steps require external access to the Synx Data Labs repository. If your environment restricts internet access, or if you prefer to host repositories within your infrastructure to ensure consistent package availability, contact Synx Data Labs to obtain a complete repository mirror for local hosting.

About Proprietary Greenplum Features

The key proprietary features to address before migrating to SynxDB 2 are Greenplum Command Center (GPCC) and QuickLZ compression.

Greenplum Command Center (GPCC)

Greenplum Command Center is a Broadcom proprietary offering that is not available in SynxDB. The primary concern during migration is the presence of the GPCC metrics_collector library is configured in shared_preload_libraries. If this library is present, the SynxDB 2 cluster will fail to start after you install SynxDB as a drop-in replacement.

Detection

To check if metrics_collector is configured in shared_preload_libraries execute the command:

gpconfig -s shared_preload_libraries

If metrics_collector appears in the output, follow the remediation steps.

Remediation

Caution: Backup the

postgresql.conffiles from all segment data directories before you make any changes.

Follow these steps to remove metrics_collector from your installation:

-

Use

gpconfigto removemetrics_collectorfromshared_preload_libraries.If

metrics_collectorwas the only entry shown in thegpconfig -soutput, remove it using the command:gpconfig -r shared_preload_librariesIf

metrics_collectorappeared with other shared libraries, use the command form:gpconfig -c shared_preload_libraries -v "comma,separated,list"Replace “comma,separated,list” with only those libraries that you want to continue using.

-

Restart the Greenplum cluster for the changes to take effect.

-

Verify that

metrics_collectorno longer appears in the configuration:gpconfig -s shared_preload_libraries

2. QuickLZ Compression

The QuickLZ compression algorithm is proprietary to Broadcom, and is not available in SynxDB. Before beginning any migration, you must identify where QuickLZ compression is being used in your environment.

Detection

Run the following script as gpadmin to identify QuickLZ usage across all databases:

#!/bin/bash

echo "Checking if QuickLZ is in use across all databases..."

for db in $(psql -t -A -c "SELECT datname FROM pg_database WHERE datistemplate = false;"); do

quicklz_count=$(psql -d $db -X -A -t -c "

SELECT COUNT(*)

FROM pg_attribute_encoding, LATERAL unnest(attoptions) AS opt

WHERE opt = 'compresstype=quicklz';

")

if [ "$quicklz_count" -gt 0 ]; then

echo "QuickLZ is in use in database: $db ($quicklz_count columns)"

else

echo "QuickLZ is NOT in use in database: $db"

fi

done

This script checks each non-template database and reports whether QuickLZ compression is in use, along with the number of affected columns.

The presence of QuickLZ compression requires careful consideration in migration planning, as it is not supported in SynxDB. If QuickLZ is detected, you will need to analyze and plan changing to an alternate compression algorithm before you can migrate to SynxDB. Contact Synx Data Labs for help with planning considerations.

Disclaimer & Attribution

SynxDB is derived from the last open-source version of Greenplum, originally developed by Pivotal Software, Inc., and maintained under Broadcom Inc.’s stewardship. Greenplum® is a registered trademark of Broadcom Inc. Synx Data Labs, Inc. and SynxDB are not affiliated with, endorsed by, or sponsored by Broadcom Inc. References to Greenplum are provided for comparative, interoperability, and attribution purposes in compliance with open-source licensing requirements.

Replacement and Fallback Procedures

This topic describes the process of replacing a running Greenplum 6 installation with SynxDB 2 while maintaining fallback capability.

Important Notes

- The Greenplum 6 installation is intentionally preserved to enable fallback if needed.

- The drop-in replacement process uses symbolic links to switch between Greenplum and SynxDB.

- Always start a new

gpadminshell after switching between versions to ensure proper environment setup.

Prerequisites

Before you make any configuration changes to your Greenplum 6 system:

- Perform the SynxDB 2: Pre-Migration Procedure to ensure that GPCC and QuickLZ are not being used in your Greenplum 6 installation.

- Perform a full backup of your data.

- Backup the

postgresql.conffiles from each segment data directory. - Document any existing configuration changes that you have made to Greenplum 6 or to Greenplum 6 host machines.

- Create an

all_hosts.txtfile that lists each hostname in the Greenplum 6 cluster. - Ensure that the

gpadminuser has sudo access to all cluster hosts. - Preserve the existing Greenplum 6 installation for fallback capability.

This guide assumes that each host can access the Synx Data Labs repositories. If your environment restricts internet access, or if you prefer to host repositories within your infrastructure to ensure consistent package availability, contact Synx Data Labs to obtain a complete repository mirror for local hosting.

Installation and Start-up Procedure

Follow these steps to install the SynxDB software to Greenplum 6 hosts, and then start the SynxDB cluster.

1. Import the SynxDB GPG Key Across Cluster

This step establishes trust for signed SynxDB packages across your cluster:

# Download and verify GPG key

gpssh -f ~/all_hosts.txt -e 'wget -nv https://synxdb-repo.s3.us-west-2.amazonaws.com/gpg/RPM-GPG-KEY-SYNXDB'

# Import the verified key into the RPM database

gpssh -f ~/all_hosts.txt -e 'sudo rpm --import RPM-GPG-KEY-SYNXDB'

# Verify the key was imported correctly

gpssh -f ~/all_hosts.txt -e 'rpm -q gpg-pubkey --qf "%{NAME}-%{VERSION}-%{RELEASE} %{SUMMARY}\n" | grep SynxDB'

2. Install the SynxDB Repository

Each package is verified for authenticity and integrity:

# Download release package

gpssh -f ~/all_hosts.txt -e 'wget -nv https://synxdb-repo.s3.us-west-2.amazonaws.com/repo-release/synxdb2-release-1-1.rpm'

# Verify package signature against imported GPG key

gpssh -f ~/all_hosts.txt -e 'rpm --checksig synxdb2-release-1-1.rpm'

# Install repository package

gpssh -f ~/all_hosts.txt -e 'sudo dnf install -y synxdb2-release-1-1.rpm'

# Verify repository installation

gpssh -f ~/all_hosts.txt -e 'sudo dnf repolist'

gpssh -f ~/all_hosts.txt -e 'rpm -qi synxdb-release'

3. Install SynxDB

# Install SynxDB package

gpssh -f ~/all_hosts.txt -e 'sudo dnf install -y synxdb'

# Verify installation

gpssh -f ~/all_hosts.txt -e 'ls -ld /usr/local/synxdb*'

gpssh -f ~/all_hosts.txt -e 'rpm -q synxdb'

gpssh -f ~/all_hosts.txt -e 'rpm -qi synxdb'

4. Verify the Current Greenplum 6 Installation

psql -c 'select version()'

which postgres

postgres --version

postgres --gp-version

which psql

psql --version

5. Stop the Greenplum Cluster

gpstop -a

6. Configure SynxDB as a Drop-in Replacement

# Create symbolic links for drop-in replacement

gpssh -f ~/all_hosts.txt -e 'sudo rm -v /usr/local/greenplum-db'

gpssh -f ~/all_hosts.txt -e 'sudo ln -s /usr/local/synxdb /usr/local/greenplum-db'

gpssh -f ~/all_hosts.txt -e 'sudo ln -s /usr/local/synxdb/synxdb_path.sh /usr/local/synxdb/greenplum_path.sh'

7. Start the Cluster using SynxDB

⚠️ IMPORTANT: Start a new gpadmin shell session before proceeding. This ensures that:

- Old environment variables are cleared

- The new environment is configured via

/usr/local/greenplum-db/greenplum_path.sh - The correct binaries are referenced in

PATH

# In your new gpadmin shell:

gpstart -a

# Verify SynxDB is running

psql -c 'select version()'

which postgres

postgres --version

postgres --gp-version

which psql

psql --version

Fallback Procedure

If necessary, you can revert to using the Greenplum 6 software by following these steps.

1. Stop the Cluster

gpstop -a

2. Restore the Greenplum Symbolic Links

# Adjust the version number to match your Greenplum installation

gpssh -f ~/all_hosts.txt -e 'sudo rm -v /usr/local/greenplum-db'

gpssh -f ~/all_hosts.txt -e 'sudo ln -s /usr/local/greenplum-db-6.26.4 /usr/local/greenplum-db'

3. Start the Cluster with Greenplum 6

⚠️ IMPORTANT: Start a new gpadmin shell session before proceeding. This ensures that:

- Old environment variables are cleared

- The new environment is configured via

/usr/local/greenplum-db/greenplum_path.sh - The correct binaries are referenced in

PATH

# In your new gpadmin shell:

gpstart -a

# Verify Greenplum is running

psql -c 'select version()'

which postgres

postgres --version

postgres --gp-version

which psql

psql --version

Disclaimer & Attribution

SynxDB is derived from the last open-source version of Greenplum, originally developed by Pivotal Software, Inc., and maintained under Broadcom Inc.’s stewardship. Greenplum® is a registered trademark of Broadcom Inc. Synx Data Labs, Inc. and SynxDB are not affiliated with, endorsed by, or sponsored by Broadcom Inc. References to Greenplum are provided for comparative, interoperability, and attribution purposes in compliance with open-source licensing requirements.

SynxDB Concepts

This section provides an overview of SynxDB components and features such as high availability, parallel data loading features, and management utilities.

- About the SynxDB Architecture

SynxDB is a massively parallel processing (MPP) database server with an architecture specially designed to manage large-scale analytic data warehouses and business intelligence workloads. - About Management and Monitoring Utilities

SynxDB provides standard command-line utilities for performing common monitoring and administration tasks. - About Concurrency Control in SynxDB

SynxDB uses the PostgreSQL Multiversion Concurrency Control (MVCC) model to manage concurrent transactions for heap tables. - About Parallel Data Loading

This topic provides a short introduction to SynxDB data loading features. - About Redundancy and Failover in SynxDB

This topic provides a high-level overview of SynxDB high availability features. - About Database Statistics in SynxDB

An overview of statistics gathered by the ANALYZE command in SynxDB.

About the SynxDB Architecture

SynxDB is a massively parallel processing (MPP) database server with an architecture specially designed to manage large-scale analytic data warehouses and business intelligence workloads.

MPP (also known as a shared nothing architecture) refers to systems with two or more processors that cooperate to carry out an operation, each processor with its own memory, operating system and disks. SynxDB uses this high-performance system architecture to distribute the load of multi-terabyte data warehouses, and can use all of a system’s resources in parallel to process a query.

SynxDB is based on PostgreSQL open-source technology. It is essentially several PostgreSQL disk-oriented database instances acting together as one cohesive database management system (DBMS). It is based on PostgreSQL 9.4, and in most cases is very similar to PostgreSQL with regard to SQL support, features, configuration options, and end-user functionality. Database users interact with SynxDB as they would with a regular PostgreSQL DBMS.

SynxDB can use the append-optimized (AO) storage format for bulk loading and reading of data, and provides performance advantages over HEAP tables. Append-optimized storage provides checksums for data protection, compression and row/column orientation. Both row-oriented or column-oriented append-optimized tables can be compressed.

The main differences between SynxDB and PostgreSQL are as follows:

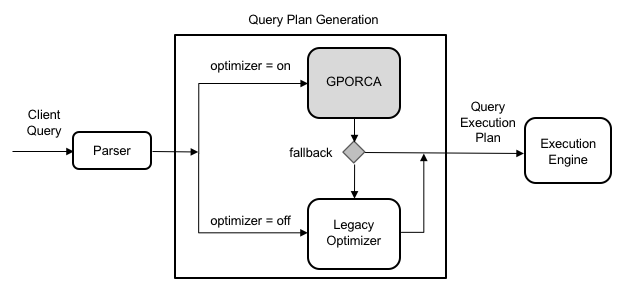

- GPORCA is leveraged for query planning, in addition to the Postgres Planner.

- SynxDB can use append-optimized storage.

- SynxDB has the option to use column storage, data that is logically organized as a table, using rows and columns that are physically stored in a column-oriented format, rather than as rows. Column storage can only be used with append-optimized tables. Column storage is compressible. It also can provide performance improvements as you only need to return the columns of interest to you. All compression algorithms can be used with either row or column-oriented tables, but Run-Length Encoded (RLE) compression can only be used with column-oriented tables. SynxDB provides compression on all Append-Optimized tables that use column storage.

The internals of PostgreSQL have been modified or supplemented to support the parallel structure of SynxDB. For example, the system catalog, optimizer, query executor, and transaction manager components have been modified and enhanced to be able to run queries simultaneously across all of the parallel PostgreSQL database instances. The SynxDB interconnect (the networking layer) enables communication between the distinct PostgreSQL instances and allows the system to behave as one logical database.

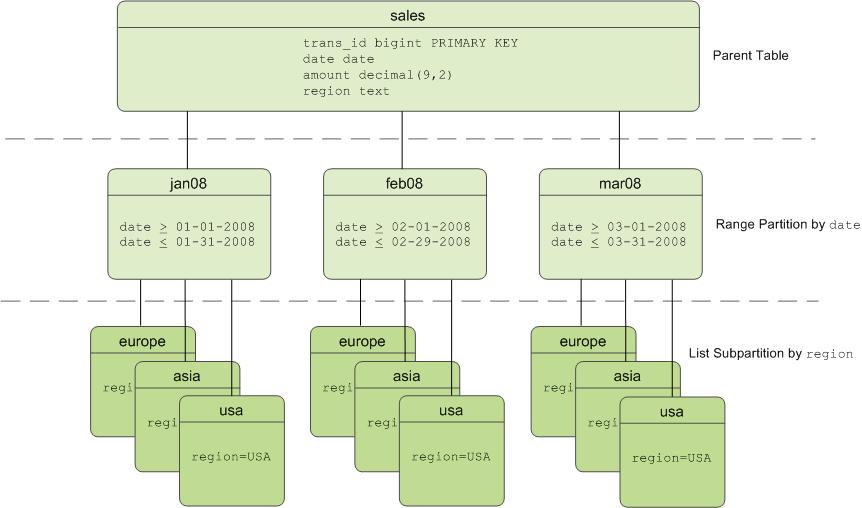

SynxDB also can use declarative partitions and sub-partitions to implicitly generate partition constraints.

SynxDB also includes features designed to optimize PostgreSQL for business intelligence (BI) workloads. For example, SynxDB has added parallel data loading (external tables), resource management, query optimizations, and storage enhancements, which are not found in standard PostgreSQL. Many features and optimizations developed by SynxDB make their way into the PostgreSQL community. For example, table partitioning is a feature first developed by SynxDB, and it is now in standard PostgreSQL.

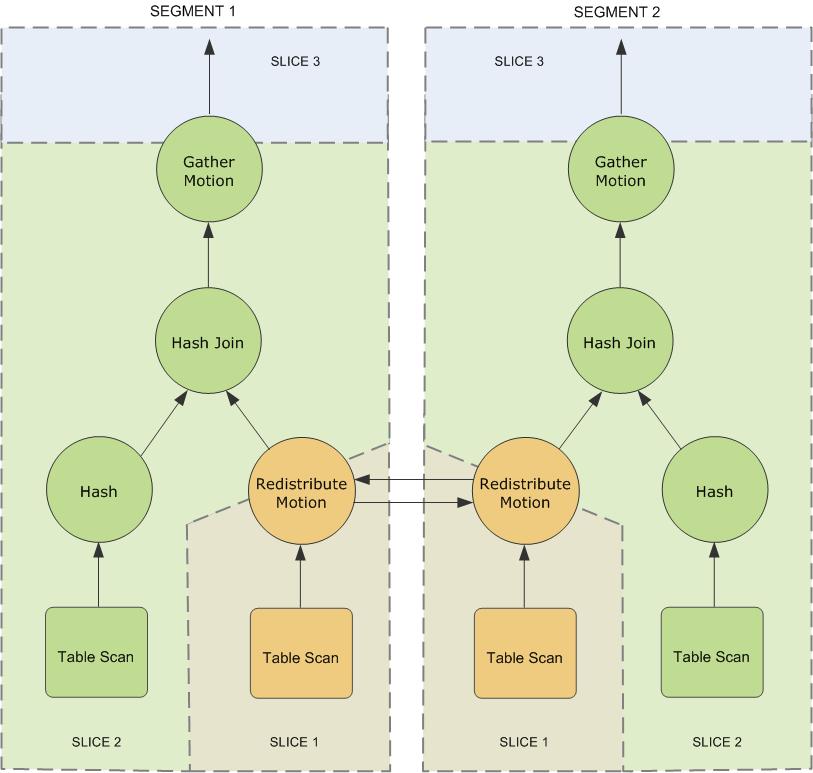

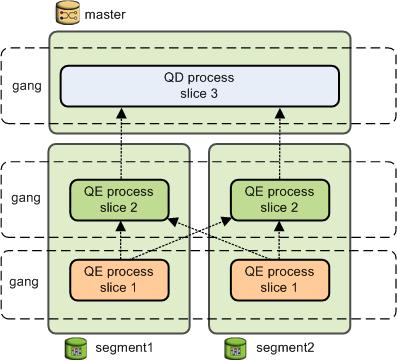

SynxDB queries use a Volcano-style query engine model, where the execution engine takes an execution plan and uses it to generate a tree of physical operators, evaluates tables through physical operators, and delivers results in a query response.

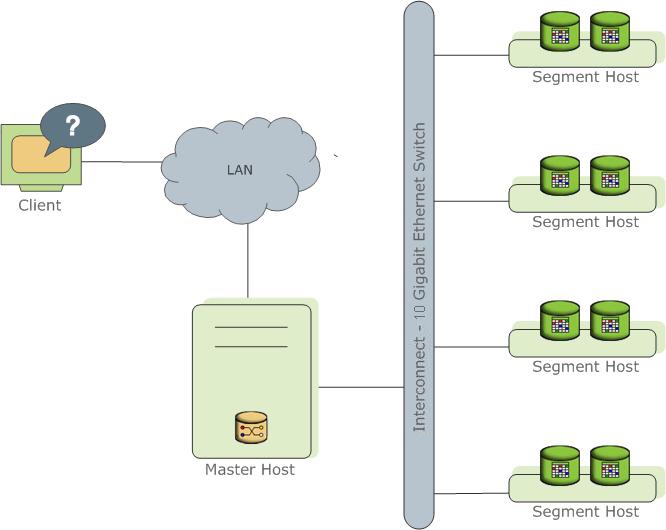

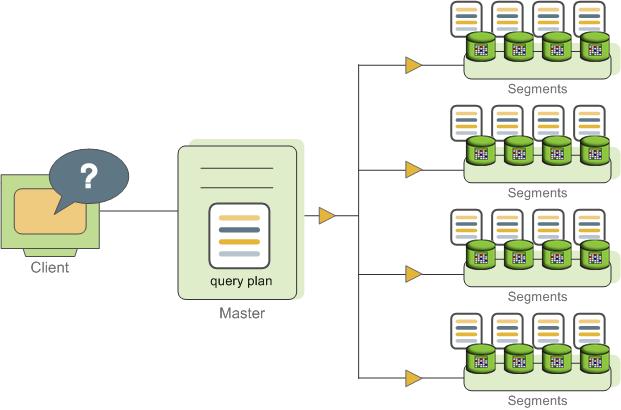

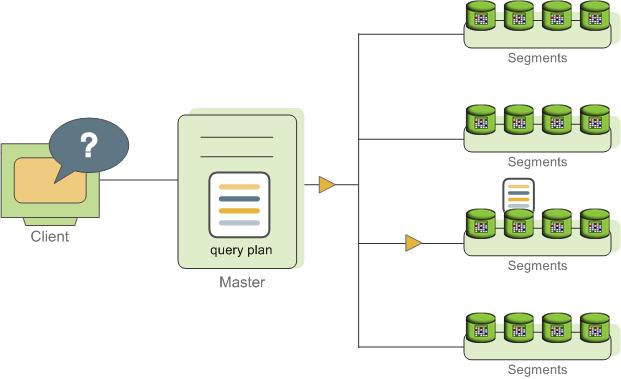

SynxDB stores and processes large amounts of data by distributing the data and processing workload across several servers or hosts. SynxDB is an array of individual databases based upon PostgreSQL 9.4 working together to present a single database image. The master is the entry point to the SynxDB system. It is the database instance to which clients connect and submit SQL statements. The master coordinates its work with the other database instances in the system, called segments, which store and process the data.

The following topics describe the components that make up a SynxDB system and how they work together.

- About the SynxDB Master

- About the SynxDB Segments

- About the SynxDB Interconnect

- About ETL Hosts for Data Loading

About the SynxDB Master

The SynxDB master is the entry to the SynxDB system, accepting client connections and SQL queries, and distributing work to the segment instances.

SynxDB end-users interact with SynxDB (through the master) as they would with a typical PostgreSQL database. They connect to the database using client programs such as psql or application programming interfaces (APIs) such as JDBC, ODBC or libpq (the PostgreSQL C API).

The master is where the global system catalog resides. The global system catalog is the set of system tables that contain metadata about the SynxDB system itself. The master does not contain any user data; data resides only on the segments. The master authenticates client connections, processes incoming SQL commands, distributes workloads among segments, coordinates the results returned by each segment, and presents the final results to the client program.

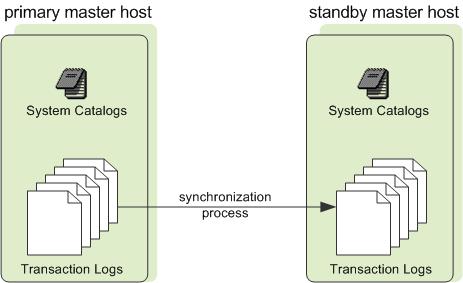

SynxDB uses Write-Ahead Logging (WAL) for master/standby master mirroring. In WAL-based logging, all modifications are written to the log before being applied, to ensure data integrity for any in-process operations.

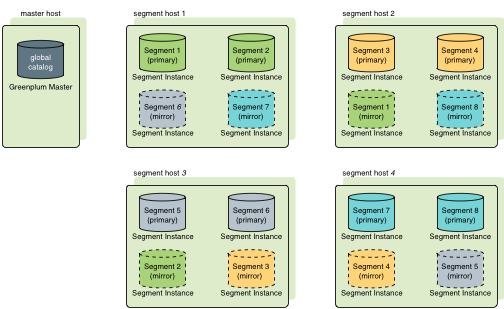

Master Redundancy

You may optionally deploy a backup or mirror of the master instance. A backup master host serves as a warm standby if the primary master host becomes nonoperational. You can deploy the standby master on a designated redundant master host or on one of the segment hosts.

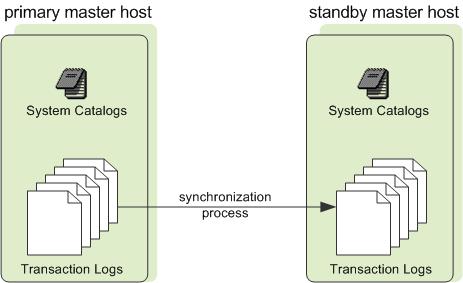

The standby master is kept up to date by a transaction log replication process, which runs on the standby master host and synchronizes the data between the primary and standby master hosts. If the primary master fails, the log replication process shuts down, and an administrator can activate the standby master in its place. When the standby master is active, the replicated logs are used to reconstruct the state of the master host at the time of the last successfully committed transaction.

Since the master does not contain any user data, only the system catalog tables need to be synchronized between the primary and backup copies. When these tables are updated, changes automatically copy over to the standby master so it is always synchronized with the primary.

About the SynxDB Segments

SynxDB segment instances are independent PostgreSQL databases that each store a portion of the data and perform the majority of query processing.

When a user connects to the database via the SynxDB master and issues a query, processes are created in each segment database to handle the work of that query. For more information about query processes, see About SynxDB Query Processing.

User-defined tables and their indexes are distributed across the available segments in a SynxDB system; each segment contains a distinct portion of data. The database server processes that serve segment data run under the corresponding segment instances. Users interact with segments in a SynxDB system through the master.

A server that runs a segment instance is called a segment host. A segment host typically runs from two to eight SynxDB segments, depending on the CPU cores, RAM, storage, network interfaces, and workloads. Segment hosts are expected to be identically configured. The key to obtaining the best performance from SynxDB is to distribute data and workloads evenly across a large number of equally capable segments so that all segments begin working on a task simultaneously and complete their work at the same time.

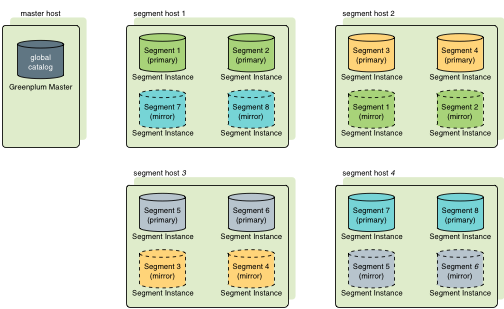

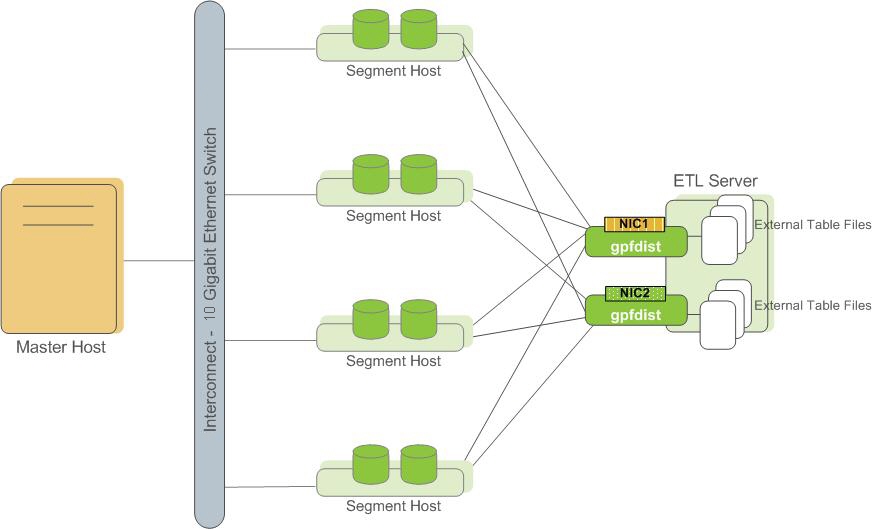

Segment Redundancy

When you deploy your SynxDB system, you have the option to configure mirror segments. Mirror segments allow database queries to fail over to a backup segment if the primary segment becomes unavailable. Mirroring is a requirement for production SynxDB systems.

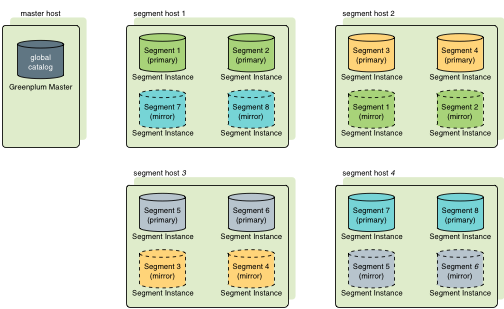

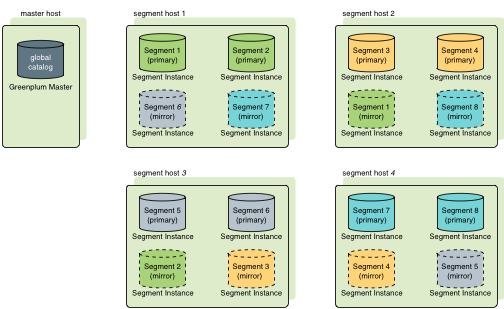

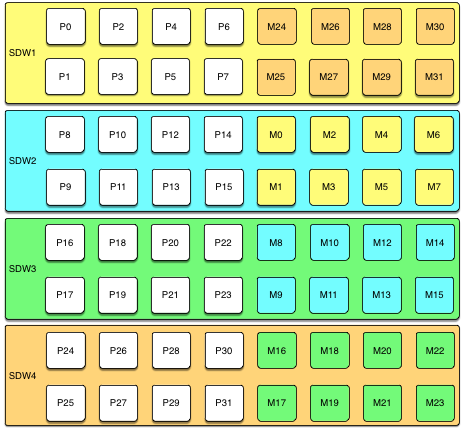

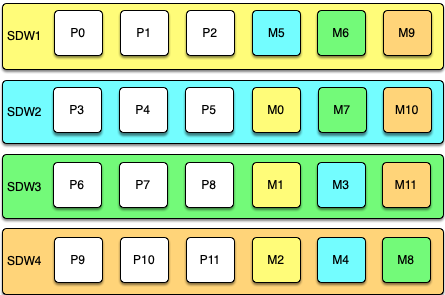

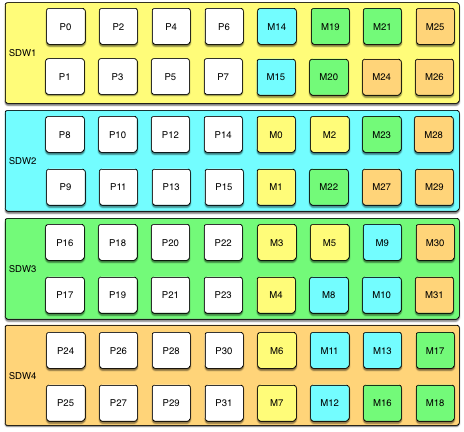

A mirror segment must always reside on a different host than its primary segment. Mirror segments can be arranged across the hosts in the system in one of two standard configurations, or in a custom configuration you design. The default configuration, called group mirroring, places the mirror segments for all primary segments on one other host. Another option, called spread mirroring, spreads mirrors for each host’s primary segments over the remaining hosts. Spread mirroring requires that there be more hosts in the system than there are primary segments on the host. On hosts with multiple network interfaces, the primary and mirror segments are distributed equally among the interfaces. This figure shows how table data is distributed across the segments when the default group mirroring option is configured:

Segment Failover and Recovery

When mirroring is enabled in a SynxDB system, the system automatically fails over to the mirror copy if a primary copy becomes unavailable. A SynxDB system can remain operational if a segment instance or host goes down only if all portions of data are available on the remaining active segments.

If the master cannot connect to a segment instance, it marks that segment instance as invalid in the SynxDB system catalog. The segment instance remains invalid and out of operation until an administrator brings that segment back online. An administrator can recover a failed segment while the system is up and running. The recovery process copies over only the changes that were missed while the segment was nonoperational.

If you do not have mirroring enabled and a segment becomes invalid, the system automatically shuts down. An administrator must recover all failed segments before operations can continue.

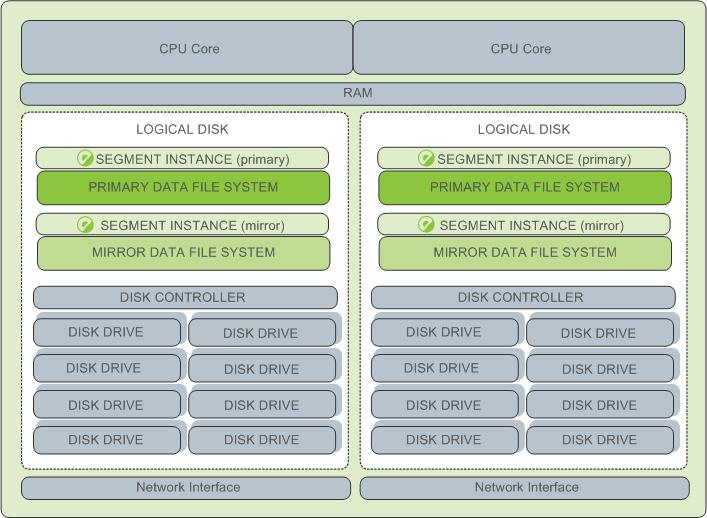

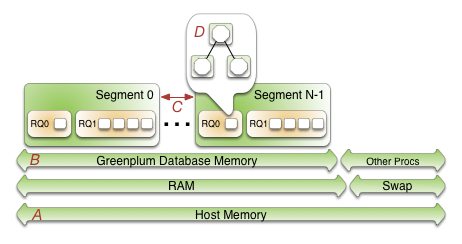

Example Segment Host Hardware Stack

Regardless of the hardware platform you choose, a production SynxDB processing node (a segment host) is typically configured as described in this section.

The segment hosts do the majority of database processing, so the segment host servers are configured in order to achieve the best performance possible from your SynxDB system. SynxDB’s performance will be as fast as the slowest segment server in the array. Therefore, it is important to ensure that the underlying hardware and operating systems that are running SynxDB are all running at their optimal performance level. It is also advised that all segment hosts in a SynxDB array have identical hardware resources and configurations.

Segment hosts should also be dedicated to SynxDB operations only. To get the best query performance, you do not want SynxDB competing with other applications for machine or network resources.

The following diagram shows an example SynxDB segment host hardware stack. The number of effective CPUs on a host is the basis for determining how many primary SynxDB segment instances to deploy per segment host. This example shows a host with two effective CPUs (one dual-core CPU). Note that there is one primary segment instance (or primary/mirror pair if using mirroring) per CPU core.

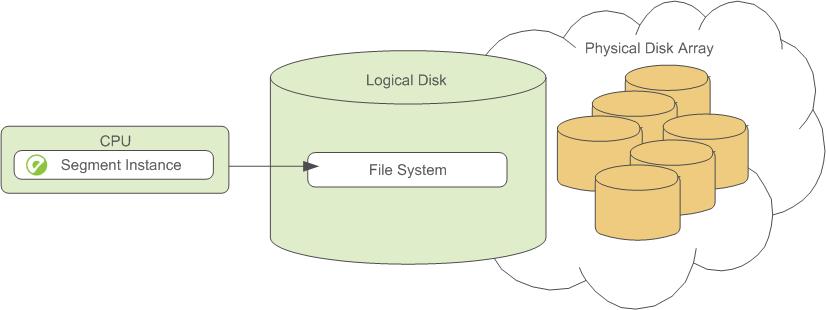

Example Segment Disk Layout

Each CPU is typically mapped to a logical disk. A logical disk consists of one primary file system (and optionally a mirror file system) accessing a pool of physical disks through an I/O channel or disk controller. The logical disk and file system are provided by the operating system. Most operating systems provide the ability for a logical disk drive to use groups of physical disks arranged in RAID arrays.

Depending on the hardware platform you choose, different RAID configurations offer different performance and capacity levels. SynxDB supports and certifies a number of reference hardware platforms and operating systems. Check with your sales account representative for the recommended configuration on your chosen platform.

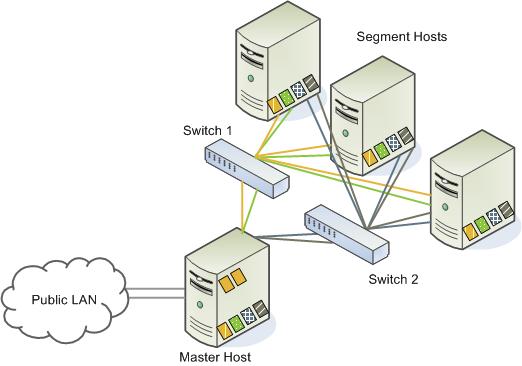

About the SynxDB Interconnect

The interconnect is the networking layer of the SynxDB architecture.

The interconnect refers to the inter-process communication between segments and the network infrastructure on which this communication relies. The SynxDB interconnect uses a standard Ethernet switching fabric. For performance reasons, a 10-Gigabit system, or faster, is recommended.

By default, the interconnect uses User Datagram Protocol with flow control (UDPIFC) for interconnect traffic to send messages over the network. The SynxDB software performs packet verification beyond what is provided by UDP. This means the reliability is equivalent to Transmission Control Protocol (TCP), and the performance and scalability exceeds TCP. If the interconnect is changed to TCP, SynxDB has a scalability limit of 1000 segment instances. With UDPIFC as the default protocol for the interconnect, this limit is not applicable.

Interconnect Redundancy

A highly available interconnect can be achieved by deploying dual 10 Gigabit Ethernet switches on your network, and redundant 10 Gigabit connections to the SynxDB master and segment host servers.

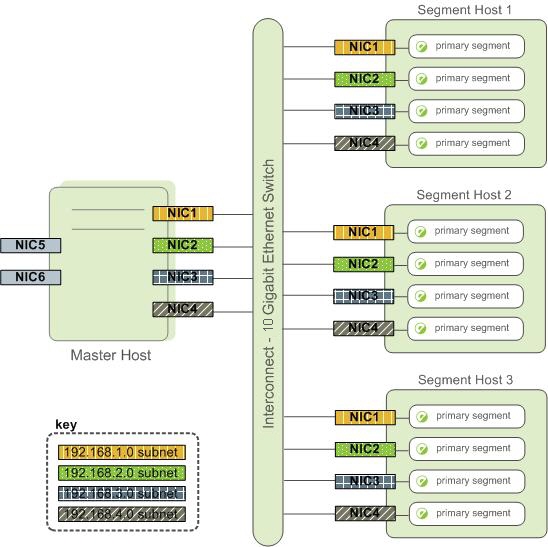

Network Interface Configuration

A segment host typically has multiple network interfaces designated to SynxDB interconnect traffic. The master host typically has additional external network interfaces in addition to the interfaces used for interconnect traffic.

Depending on the number of interfaces available, you will want to distribute interconnect network traffic across the number of available interfaces. This is done by assigning segment instances to a particular network interface and ensuring that the primary segments are evenly balanced over the number of available interfaces.

This is done by creating separate host address names for each network interface. For example, if a host has four network interfaces, then it would have four corresponding host addresses, each of which maps to one or more primary segment instances. The /etc/hosts file should be configured to contain not only the host name of each machine, but also all interface host addresses for all of the SynxDB hosts (master, standby master, segments, and ETL hosts).

With this configuration, the operating system automatically selects the best path to the destination. SynxDB automatically balances the network destinations to maximize parallelism.

Switch Configuration

When using multiple 10 Gigabit Ethernet switches within your SynxDB array, evenly divide the number of subnets between each switch. In this example configuration, if we had two switches, NICs 1 and 2 on each host would use switch 1 and NICs 3 and 4 on each host would use switch 2. For the master host, the host name bound to NIC 1 (and therefore using switch 1) is the effective master host name for the array. Therefore, if deploying a warm standby master for redundancy purposes, the standby master should map to a NIC that uses a different switch than the primary master.

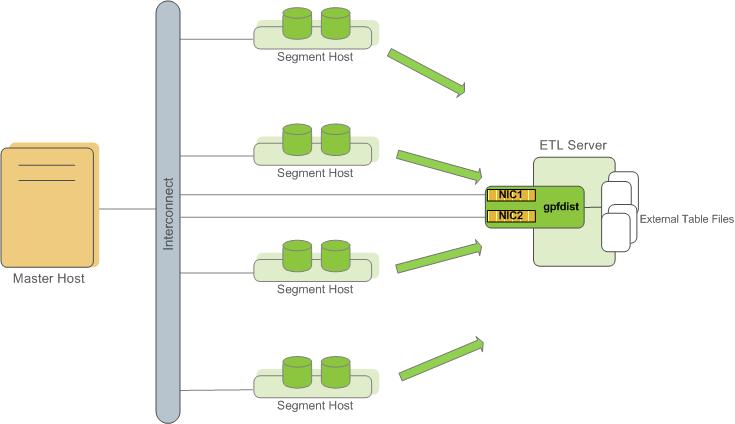

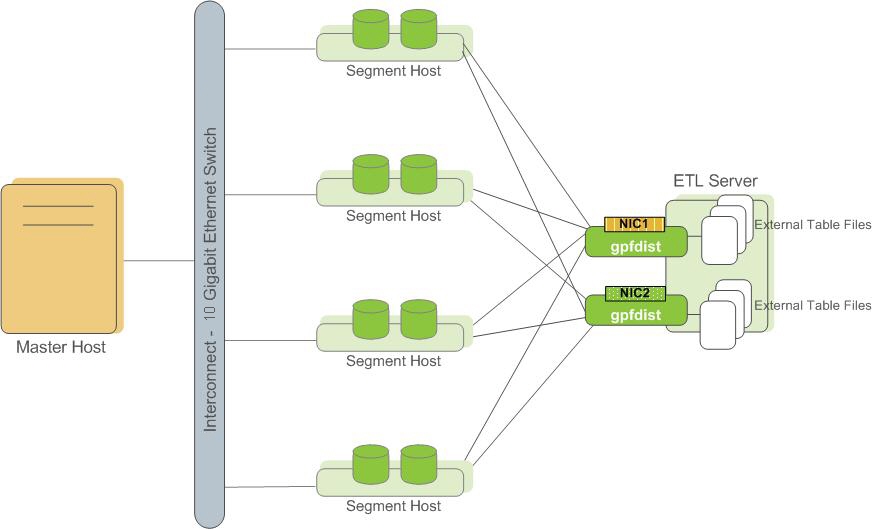

About ETL Hosts for Data Loading

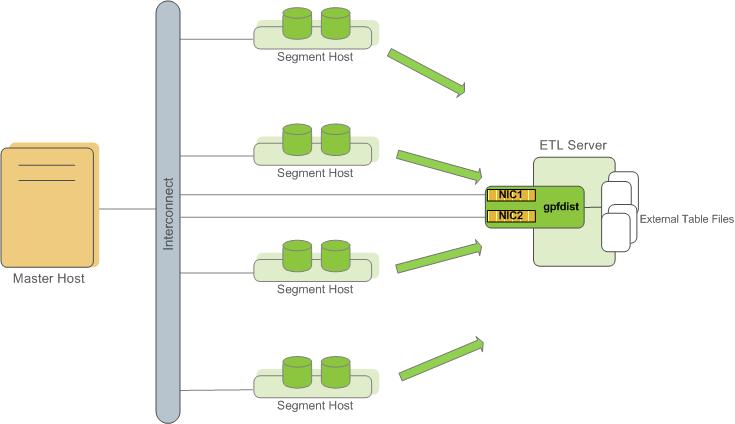

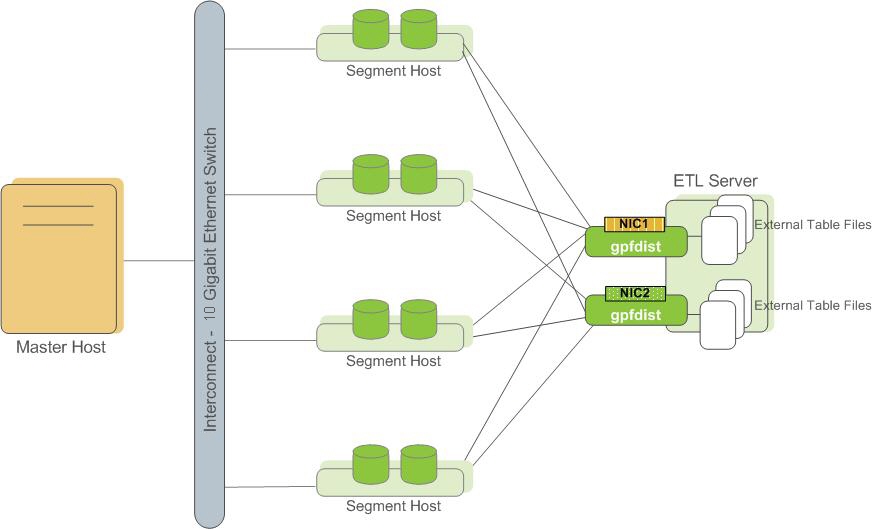

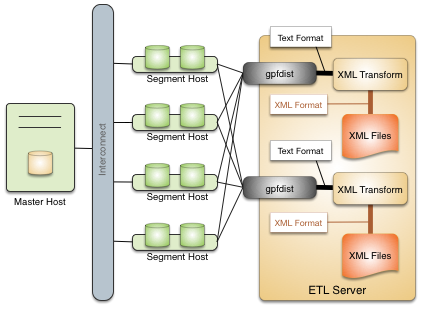

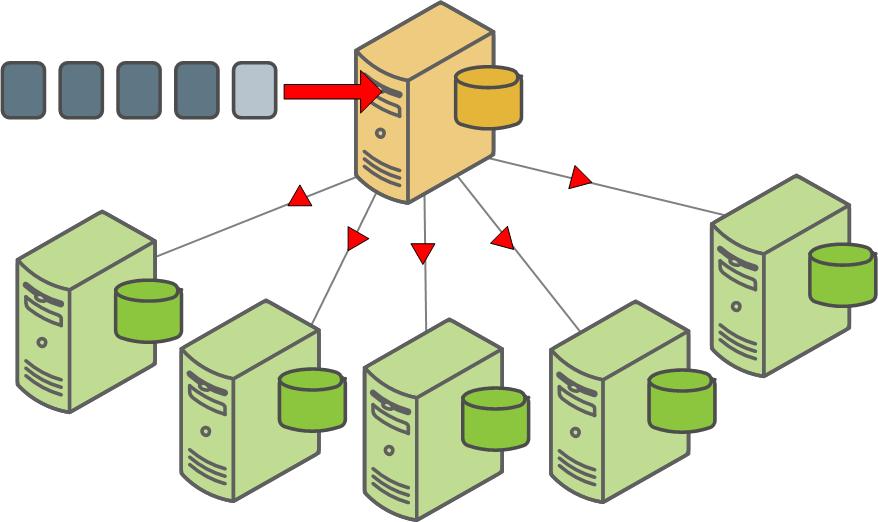

SynxDB supports fast, parallel data loading with its external tables feature. By using external tables in conjunction with SynxDB’s parallel file server (gpfdist), administrators can achieve maximum parallelism and load bandwidth from their SynxDB system. Many production systems deploy designated ETL servers for data loading purposes. These machines run the SynxDB parallel file server (gpfdist), but not SynxDB instances.

One advantage of using the gpfdist file server program is that it ensures that all of the segments in your SynxDB system are fully utilized when reading from external table data files.

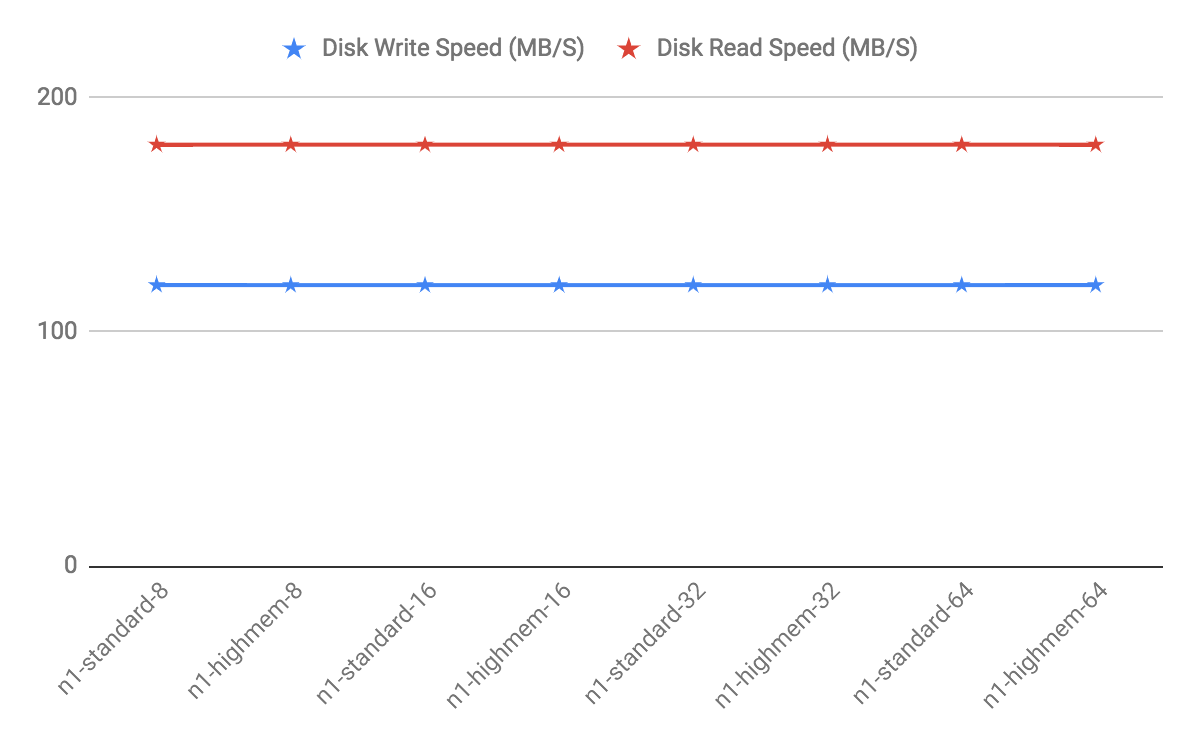

The gpfdist program can serve data to the segment instances at an average rate of about 350 MB/s for delimited text formatted files and 200 MB/s for CSV formatted files. Therefore, you should consider the following options when running gpfdist in order to maximize the network bandwidth of your ETL systems:

- If your ETL machine is configured with multiple network interface cards (NICs) as described in Network Interface Configuration, run one instance of

gpfdiston your ETL host and then define your external table definition so that the host name of each NIC is declared in theLOCATIONclause (seeCREATE EXTERNAL TABLEin the SynxDB Reference Guide). This allows network traffic between your SynxDB segment hosts and your ETL host to use all NICs simultaneously.

- Run multiple

gpfdistinstances on your ETL host and divide your external data files equally between each instance. For example, if you have an ETL system with two network interface cards (NICs), then you could run twogpfdistinstances on that machine to maximize your load performance. You would then divide the external table data files evenly between the twogpfdistprograms.

About Management and Monitoring Utilities

SynxDB provides standard command-line utilities for performing common monitoring and administration tasks.

SynxDB command-line utilities are located in the $GPHOME/bin directory and are run on the master host. SynxDB provides utilities for the following administration tasks:

- Installing SynxDB on an array

- Initializing a SynxDB System

- Starting and stopping SynxDB

- Adding or removing a host

- Expanding the array and redistributing tables among new segments

- Managing recovery for failed segment instances

- Managing failover and recovery for a failed master instance

- Backing up and restoring a database (in parallel)

- Loading data in parallel

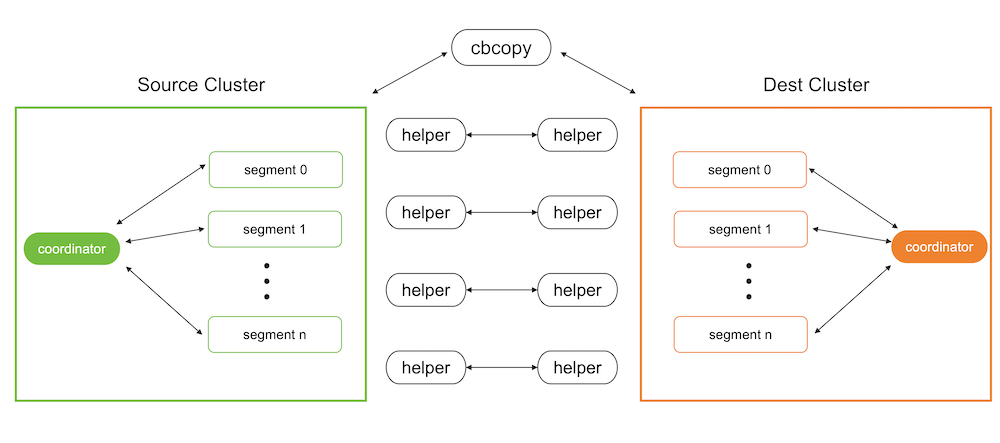

- Transferring data between SynxDB databases

- System state reporting

About Concurrency Control in SynxDB

SynxDB uses the PostgreSQL Multiversion Concurrency Control (MVCC) model to manage concurrent transactions for heap tables.

Concurrency control in a database management system allows concurrent queries to complete with correct results while ensuring the integrity of the database. Traditional databases use a two-phase locking protocol that prevents a transaction from modifying data that has been read by another concurrent transaction and prevents any concurrent transaction from reading or writing data that another transaction has updated. The locks required to coordinate transactions add contention to the database, reducing overall transaction throughput.

SynxDB uses the PostgreSQL Multiversion Concurrency Control (MVCC) model to manage concurrency for heap tables. With MVCC, each query operates on a snapshot of the database when the query starts. While it runs, a query cannot see changes made by other concurrent transactions. This ensures that a query sees a consistent view of the database. Queries that read rows can never block waiting for transactions that write rows. Conversely, queries that write rows cannot be blocked by transactions that read rows. This allows much greater concurrency than traditional database systems that employ locks to coordinate access between transactions that read and write data.

Note Append-optimized tables are managed with a different concurrency control model than the MVCC model discussed in this topic. They are intended for “write-once, read-many” applications that never, or only very rarely, perform row-level updates.

Snapshots

The MVCC model depends on the system’s ability to manage multiple versions of data rows. A query operates on a snapshot of the database at the start of the query. A snapshot is the set of rows that are visible at the beginning of a statement or transaction. The snapshot ensures the query has a consistent and valid view of the database for the duration of its execution.

Each transaction is assigned a unique transaction ID (XID), an incrementing 32-bit value. When a new transaction starts, it is assigned the next XID. An SQL statement that is not enclosed in a transaction is treated as a single-statement transaction—the BEGIN and COMMIT are added implicitly. This is similar to autocommit in some database systems.

Note SynxDB assigns XID values only to transactions that involve DDL or DML operations, which are typically the only transactions that require an XID.

When a transaction inserts a row, the XID is saved with the row in the xmin system column. When a transaction deletes a row, the XID is saved in the xmax system column. Updating a row is treated as a delete and an insert, so the XID is saved to the xmax of the current row and the xmin of the newly inserted row. The xmin and xmax columns, together with the transaction completion status, specify a range of transactions for which the version of the row is visible. A transaction can see the effects of all transactions less than xmin, which are guaranteed to be committed, but it cannot see the effects of any transaction greater than or equal to xmax.

Multi-statement transactions must also record which command within a transaction inserted a row (cmin) or deleted a row (cmax) so that the transaction can see changes made by previous commands in the transaction. The command sequence is only relevant during the transaction, so the sequence is reset to 0 at the beginning of a transaction.

XID is a property of the database. Each segment database has its own XID sequence that cannot be compared to the XIDs of other segment databases. The master coordinates distributed transactions with the segments using a cluster-wide session ID number, called gp_session_id. The segments maintain a mapping of distributed transaction IDs with their local XIDs. The master coordinates distributed transactions across all of the segment with the two-phase commit protocol. If a transaction fails on any one segment, it is rolled back on all segments.

You can see the xmin, xmax, cmin, and cmax columns for any row with a SELECT statement:

SELECT xmin, xmax, cmin, cmax, * FROM <tablename>;

Because you run the SELECT command on the master, the XIDs are the distributed transactions IDs. If you could run the command in an individual segment database, the xmin and xmax values would be the segment’s local XIDs.

Note SynxDB distributes all of a replicated table’s rows to every segment, so each row is duplicated on every segment. Each segment instance maintains its own values for the system columns

xmin,xmax,cmin, andcmax, as well as for thegp_segment_idandctidsystem columns. SynxDB does not permit user queries to access these system columns for replicated tables because they have no single, unambiguous value to evaluate in a query.

Transaction ID Wraparound

The MVCC model uses transaction IDs (XIDs) to determine which rows are visible at the beginning of a query or transaction. The XID is a 32-bit value, so a database could theoretically run over four billion transactions before the value overflows and wraps to zero. However, SynxDB uses modulo 232 arithmetic with XIDs, which allows the transaction IDs to wrap around, much as a clock wraps at twelve o’clock. For any given XID, there could be about two billion past XIDs and two billion future XIDs. This works until a version of a row persists through about two billion transactions, when it suddenly appears to be a new row. To prevent this, SynxDB has a special XID, called FrozenXID, which is always considered older than any regular XID it is compared with. The xmin of a row must be replaced with FrozenXID within two billion transactions, and this is one of the functions the VACUUM command performs.

Vacuuming the database at least every two billion transactions prevents XID wraparound. SynxDB monitors the transaction ID and warns if a VACUUM operation is required.

A warning is issued when a significant portion of the transaction IDs are no longer available and before transaction ID wraparound occurs:

WARNING: database "<database_name>" must be vacuumed within <number_of_transactions> transactions

When the warning is issued, a VACUUM operation is required. If a VACUUM operation is not performed, SynxDB stops creating transactions to avoid possible data loss when it reaches a limit prior to when transaction ID wraparound occurs and issues this error:

FATAL: database is not accepting commands to avoid wraparound data loss in database "<database_name>"

See Recovering from a Transaction ID Limit Error for the procedure to recover from this error.

The server configuration parameters xid_warn_limit and xid_stop_limit control when the warning and error are displayed. The xid_warn_limit parameter specifies the number of transaction IDs before the xid_stop_limit when the warning is issued. The xid_stop_limit parameter specifies the number of transaction IDs before wraparound would occur when the error is issued and new transactions cannot be created.

Transaction Isolation Levels

The SQL standard defines four levels of transaction isolation. The most strict is Serializable, which the standard defines as any concurrent execution of a set of Serializable transactions is guaranteed to produce the same effect as running them one at a time in some order. The other three levels are defined in terms of phenomena, resulting from interaction between concurrent transactions, which must not occur at each level. The standard notes that due to the definition of Serializable, none of these phenomena are possible at that level.

The phenomena which are prohibited at various levels are:

- dirty read – A transaction reads data written by a concurrent uncommitted transaction.

- non-repeatable read – A transaction re-reads data that it has previously read and finds that the data has been modified by another transaction (that committed since the initial read).

- phantom read – A transaction re-executes a query returning a set of rows that satisfy a search condition and finds that the set of rows satisfying the condition has changed due to another recently-committed transaction.

- serialization anomaly - The result of successfully committing a group of transactions is inconsistent with all possible orderings of running those transactions one at a time.

The four transaction isolation levels defined in the SQL standard and the corresponding behaviors are described in the table below.

| Isolation Level | Dirty Read | Non-Repeatable | Phantom Read | Serialization Anomoly |

|---|---|---|---|---|

READ UNCOMMITTED | Allowed, but not in SynxDB | Possible | Possible | Possible |

READ COMMITTED | Impossible | Possible | Possible | Possible |

REPEATABLE READ | Impossible | Impossible | Allowed, but not in SynxDB | Possible |

SERIALIZABLE | Impossible | Impossible | Impossible | Impossible |

SynxDB implements only two distinct transaction isolation levels, although you can request any of the four described levels. The SynxDB READ UNCOMMITTED level behaves like READ COMMITTED, and the SERIALIZABLE level falls back to REPEATABLE READ.

The table also shows that SynxDB’s REPEATABLE READ implementation does not allow phantom reads. This is acceptable under the SQL standard because the standard specifies which anomalies must not occur at certain isolation levels; higher guarantees are acceptable.

The following sections detail the behavior of the available isolation levels.

Important: Some SynxDB data types and functions have special rules regarding transactional behavior. In particular, changes made to a sequence (and therefore the counter of a column declared using serial) are immediately visible to all other transactions, and are not rolled back if the transaction that made the changes aborts.

Read Committed Isolation Level

The default isolation level in SynxDB is READ COMMITTED. When a transaction uses this isolation level, a SELECT query (without a FOR UPDATE/SHARE clause) sees only data committed before the query began; it never sees either uncommitted data or changes committed during query execution by concurrent transactions. In effect, a SELECT query sees a snapshot of the database at the instant the query begins to run. However, SELECT does see the effects of previous updates executed within its own transaction, even though they are not yet committed. Also note that two successive SELECT commands can see different data, even though they are within a single transaction, if other transactions commit changes after the first SELECT starts and before the second SELECT starts.

UPDATE, DELETE, SELECT FOR UPDATE, and SELECT FOR SHARE commands behave the same as SELECT in terms of searching for target rows: they find only the target rows that were committed as of the command start time. However, such a target row might have already been updated (or deleted or locked) by another concurrent transaction by the time it is found. In this case, the would-be updater waits for the first updating transaction to commit or roll back (if it is still in progress). If the first updater rolls back, then its effects are negated and the second updater can proceed with updating the originally found row. If the first updater commits, the second updater will ignore the row if the first updater deleted it, otherwise it will attempt to apply its operation to the updated version of the row. The search condition of the command (the WHERE clause) is re-evaluated to see if the updated version of the row still matches the search condition. If so, the second updater proceeds with its operation using the updated version of the row. In the case of SELECT FOR UPDATE and SELECT FOR SHARE, this means the updated version of the row is locked and returned to the client.

INSERT with an ON CONFLICT DO UPDATE clause behaves similarly. In READ COMMITTED mode, each row proposed for insertion will either insert or update. Unless there are unrelated errors, one of those two outcomes is guaranteed. If a conflict originates in another transaction whose effects are not yet visible to the INSERT , the UPDATE clause will affect that row, even though possibly no version of that row is conventionally visible to the command.

INSERT with an ON CONFLICT DO NOTHING clause may have insertion not proceed for a row due to the outcome of another transaction whose effects are not visible to the INSERT snapshot. Again, this is only the case in READ COMMITTED mode.

Because of the above rules, it is possible for an updating command to see an inconsistent snapshot: it can see the effects of concurrent updating commands on the same rows it is trying to update, but it does not see effects of those commands on other rows in the database. This behavior makes READ COMMITTED mode unsuitable for commands that involve complex search conditions; however, it is just right for simpler cases. For example, consider updating bank balances with transactions like:

BEGIN;

UPDATE accounts SET balance = balance + 100.00 WHERE acctnum = 12345;

UPDATE accounts SET balance = balance - 100.00 WHERE acctnum = 7534;

COMMIT;

If two such transactions concurrently try to change the balance of account 12345, we clearly want the second transaction to start with the updated version of the account’s row. Because each command is affecting only a predetermined row, letting it access the updated version of the row does not create any troublesome inconsistency.

More complex usage may produce undesirable results in READ COMMITTED mode. For example, consider a DELETE command operating on data that is being both added and removed from its restriction criteria by another command; assume website is a two-row table with website.hits equaling 9 and 10:

BEGIN;

UPDATE website SET hits = hits + 1;

-- run from another session: DELETE FROM website WHERE hits = 10;

COMMIT;

The DELETE will have no effect even though there is a website.hits = 10 row before and after the UPDATE. This occurs because the pre-update row value 9 is skipped, and when the UPDATE completes and DELETE obtains a lock, the new row value is no longer 10 but 11, which no longer matches the criteria.

Because READ COMMITTED mode starts each command with a new snapshot that includes all transactions committed up to that instant, subsequent commands in the same transaction will see the effects of the committed concurrent transaction in any case. The point at issue above is whether or not a single command sees an absolutely consistent view of the database.

The partial transaction isolation provided by READ COMMITTED mode is adequate for many applications, and this mode is fast and simple to use; however, it is not sufficient for all cases. Applications that do complex queries and updates might require a more rigorously consistent view of the database than READ COMMITTED mode provides.

Repeatable Read Isolation Level

The REPEATABLE READ isolation level only sees data committed before the transaction began; it never sees either uncommitted data or changes committed during transaction execution by concurrent transactions. (However, the query does see the effects of previous updates executed within its own transaction, even though they are not yet committed.) This is a stronger guarantee than is required by the SQL standard for this isolation level, and prevents all of the phenomena described in the table above. As mentioned previously, this is specifically allowed by the standard, which only describes the minimum protections each isolation level must provide.

The REPEATABLE READ isolation level is different from READ COMMITTED in that a query in a REPEATABLE READ transaction sees a snapshot as of the start of the first non-transaction-control statement in the transaction, not as of the start of the current statement within the transaction. Successive SELECT commands within a single transaction see the same data; they do not see changes made by other transactions that committed after their own transaction started.

Applications using this level must be prepared to retry transactions due to serialization failures.

UPDATE, DELETE, SELECT FOR UPDATE, and SELECT FOR SHARE commands behave the same as SELECT in terms of searching for target rows: they will only find target rows that were committed as of the transaction start time. However, such a target row might have already been updated (or deleted or locked) by another concurrent transaction by the time it is found. In this case, the REPEATABLE READ transaction will wait for the first updating transaction to commit or roll back (if it is still in progress). If the first updater rolls back, then its effects are negated and the REPEATABLE READ can proceed with updating the originally found row. But if the first updater commits (and actually updated or deleted the row, not just locked it), then SynxDB rolls back the REPEATABLE READ transaction with the message:

ERROR: could not serialize access due to concurrent update

because a REPEATABLE READ transaction cannot modify or lock rows changed by other transactions after the REPEATABLE READ transaction began.

When an application receives this error message, it should abort the current transaction and retry the whole transaction from the beginning. The second time through, the transaction will see the previously-committed change as part of its initial view of the database, so there is no logical conflict in using the new version of the row as the starting point for the new transaction’s update.

Note that you may need to retry only updating transactions; read-only transactions will never have serialization conflicts.

The REPEATABLE READ mode provides a rigorous guarantee that each transaction sees a completely stable view of the database. However, this view will not necessarily always be consistent with some serial (one at a time) execution of concurrent transactions of the same level. For example, even a read-only transaction at this level may see a control record updated to show that a batch has been completed but not see one of the detail records which is logically part of the batch because it read an earlier revision of the control record. Attempts to enforce business rules by transactions running at this isolation level are not likely to work correctly without careful use of explicit locks to block conflicting transactions.

Serializable Isolation Level

The SERIALIZABLE level, which SynxDB does not fully support, guarantees that a set of transactions run concurrently produces the same result as if the transactions ran sequentially one after the other. If SERIALIZABLE is specified, SynxDB falls back to REPEATABLE READ. The MVCC Snapshot Isolation (SI) model prevents dirty reads, non-repeatable reads, and phantom reads without expensive locking, but there are other interactions that can occur between some SERIALIZABLE transactions in SynxDB that prevent them from being truly serializable. These anomalies can often be attributed to the fact that SynxDB does not perform predicate locking, which means that a write in one transaction can affect the result of a previous read in another concurrent transaction.

About Setting the Transaction Isolation Level

The default transaction isolation level for SynxDB is specified by the default_transaction_isolation server configuration parameter, and is initially READ COMMITTED.

When you set default_transaction_isolation in a session, you specify the default transaction isolation level for all transactions in the session.

To set the isolation level for the current transaction, you can use the SET TRANSACTION SQL command. Be sure to set the isolation level before any SELECT, INSERT, DELETE, UPDATE, or COPY statement:

BEGIN;

SET TRANSACTION ISOLATION LEVEL REPEATABLE READ;

...

COMMIT;

You can also specify the isolation mode in a BEGIN statement:

BEGIN TRANSACTION ISOLATION LEVEL REPEATABLE READ;

Removing Dead Rows from Tables

Updating or deleting a row leaves an expired version of the row in the table. When an expired row is no longer referenced by any active transactions, it can be removed and the space it occupied can be reused. The VACUUM command marks the space used by expired rows for reuse.

When expired rows accumulate in a table, the disk files must be extended to accommodate new rows. Performance degrades due to the increased disk I/O required to run queries. This condition is called bloat and it should be managed by regularly vacuuming tables.

The VACUUM command (without FULL) can run concurrently with other queries. It marks the space previously used by the expired rows as free, and updates the free space map. When SynxDB later needs space for new rows, it first consults the table’s free space map to find pages with available space. If none are found, new pages will be appended to the file.

VACUUM (without FULL) does not consolidate pages or reduce the size of the table on disk. The space it recovers is only available through the free space map. To prevent disk files from growing, it is important to run VACUUM often enough. The frequency of required VACUUM runs depends on the frequency of updates and deletes in the table (inserts only ever add new rows). Heavily updated tables might require several VACUUM runs per day, to ensure that the available free space can be found through the free space map. It is also important to run VACUUM after running a transaction that updates or deletes a large number of rows.

The VACUUM FULL command rewrites the table without expired rows, reducing the table to its minimum size. Every page in the table is checked, and visible rows are moved up into pages which are not yet fully packed. Empty pages are discarded. The table is locked until VACUUM FULL completes. This is very expensive compared to the regular VACUUM command, and can be avoided or postponed by vacuuming regularly. It is best to run VACUUM FULL during a maintenance period. An alternative to VACUUM FULL is to recreate the table with a CREATE TABLE AS statement and then drop the old table.

You can run VACUUM VERBOSE tablename to get a report, by segment, of the number of dead rows removed, the number of pages affected, and the number of pages with usable free space.

Query the pg_class system table to find out how many pages a table is using across all segments. Be sure to ANALYZE the table first to get accurate data.

SELECT relname, relpages, reltuples FROM pg_class WHERE relname='<tablename>';

Another useful tool is the gp_bloat_diag view in the gp_toolkit schema, which identifies bloat in tables by comparing the actual number of pages used by a table to the expected number. See “The gp_toolkit Administrative Schema” in the SynxDB Reference Guide for more about gp_bloat_diag.

Example of Managing Transaction IDs

For SynxDB, the transaction ID (XID) value an incrementing 32-bit (232) value. The maximum unsigned 32-bit value is 4,294,967,295, or about four billion. The XID values restart at 3 after the maximum is reached. SynxDB handles the limit of XID values with two features:

-

Calculations on XID values using modulo-232 arithmetic that allow SynxDB to reuse XID values. The modulo calculations determine the order of transactions, whether one transaction has occurred before or after another, based on the XID.

Every XID value can have up to two billion (231) XID values that are considered previous transactions and two billion (231 -1 ) XID values that are considered newer transactions. The XID values can be considered a circular set of values with no endpoint similar to a 24 hour clock.

Using the SynxDB modulo calculations, as long as two XIDs are within 231 transactions of each other, comparing them yields the correct result.

-

A frozen XID value that SynxDB uses as the XID for current (visible) data rows. Setting a row’s XID to the frozen XID performs two functions.

- When SynxDB compares XIDs using the modulo calculations, the frozen XID is always smaller, earlier, when compared to any other XID. If a row’s XID is not set to the frozen XID and 231 new transactions are run, the row appears to be run in the future based on the modulo calculation.

- When the row’s XID is set to the frozen XID, the original XID can be used, without duplicating the XID. This keeps the number of data rows on disk with assigned XIDs below (232).

Note SynxDB assigns XID values only to transactions that involve DDL or DML operations, which are typically the only transactions that require an XID.

Simple MVCC Example

This is a simple example of the concepts of a MVCC database and how it manages data and transactions with transaction IDs. This simple MVCC database example consists of a single table:

- The table is a simple table with 2 columns and 4 rows of data.

- The valid transaction ID (XID) values are from 0 up to 9, after 9 the XID restarts at 0.

- The frozen XID is -2. This is different than the SynxDB frozen XID.

- Transactions are performed on a single row.

- Only insert and update operations are performed.

- All updated rows remain on disk, no operations are performed to remove obsolete rows.

The example only updates the amount values. No other changes to the table.

The example shows these concepts.

- How transaction IDs are used to manage multiple, simultaneous transactions on a table.

- How transaction IDs are managed with the frozen XID

- How the modulo calculation determines the order of transactions based on transaction IDs

Managing Simultaneous Transactions

This table is the initial table data on disk with no updates. The table contains two database columns for transaction IDs, xmin (transaction that created the row) and xmax (transaction that updated the row). In the table, changes are added, in order, to the bottom of the table.

| item | amount | xmin | xmax |

|---|---|---|---|

| widget | 100 | 0 | null |

| giblet | 200 | 1 | null |

| sprocket | 300 | 2 | null |

| gizmo | 400 | 3 | null |

The next table shows the table data on disk after some updates on the amount values have been performed.

- xid = 4:

update tbl set amount=208 where item = 'widget' - xid = 5:

update tbl set amount=133 where item = 'sprocket' - xid = 6:

update tbl set amount=16 where item = 'widget'

In the next table, the bold items are the current rows for the table. The other rows are obsolete rows, table data that on disk but is no longer current. Using the xmax value, you can determine the current rows of the table by selecting the rows with null value. SynxDB uses a slightly different method to determine current table rows.

| item | amount | xmin | xmax |

|---|---|---|---|

| widget | 100 | 0 | 4 |

| giblet | 200 | 1 | null |

| sprocket | 300 | 2 | 5 |

| gizmo | 400 | 3 | null |

| widget | 208 | 4 | 6 |

| sprocket | 133 | 5 | null |

| widget | 16 | 6 | null |

The simple MVCC database works with XID values to determine the state of the table. For example, both these independent transactions run concurrently.

UPDATEcommand changes the sprocket amount value to133(xmin value5)SELECTcommand returns the value of sprocket.

During the UPDATE transaction, the database returns the value of sprocket 300, until the UPDATE transaction completes.

Managing XIDs and the Frozen XID

For this simple example, the database is close to running out of available XID values. When SynxDB is close to running out of available XID values, SynxDB takes these actions.

-

SynxDB issues a warning stating that the database is running out of XID values.

WARNING: database "<database_name>" must be vacuumed within <number_of_transactions> transactions -

Before the last XID is assigned, SynxDB stops accepting transactions to prevent assigning an XID value twice and issues this message.

FATAL: database is not accepting commands to avoid wraparound data loss in database "<database_name>"

To manage transaction IDs and table data that is stored on disk, SynxDB provides the VACUUM command.

- A

VACUUMoperation frees up XID values so that a table can have more than 10 rows by changing the xmin values to the frozen XID. - A

VACUUMoperation manages obsolete or deleted table rows on disk. This database’sVACUUMcommand changes the XID valuesobsoleteto indicate obsolete rows. A SynxDBVACUUMoperation, without theFULLoption, deletes the data opportunistically to remove rows on disk with minimal impact to performance and data availability.

For the example table, a VACUUM operation has been performed on the table. The command updated table data on disk. This version of the VACUUM command performs slightly differently than the SynxDB command, but the concepts are the same.

-

For the widget and sprocket rows on disk that are no longer current, the rows have been marked as

obsolete. -

For the giblet and gizmo rows that are current, the xmin has been changed to the frozen XID.

The values are still current table values (the row’s xmax value is

null). However, the table row is visible to all transactions because the xmin value is frozen XID value that is older than all other XID values when modulo calculations are performed.

After the VACUUM operation, the XID values 0, 1, 2, and 3 available for use.

| item | amount | xmin | xmax |

|---|---|---|---|

| widget | 100 | obsolete | obsolete |

| giblet | 200 | -2 | null |

| sprocket | 300 | obsolete | obsolete |

| gizmo | 400 | -2 | null |

| widget | 208 | 4 | 6 |

| sprocket | 133 | 5 | null |

| widget | 16 | 6 | null |

When a row disk with the xmin value of -2 is updated, the xmax value is replaced with the transaction XID as usual, and the row on disk is considered obsolete after any concurrent transactions that access the row have completed.

Obsolete rows can be deleted from disk. For SynxDB, the VACUUM command, with FULL option, does more extensive processing to reclaim disk space.

Example of XID Modulo Calculations

The next table shows the table data on disk after more UPDATE transactions. The XID values have rolled over and start over at 0. No additional VACUUM operations have been performed.

| item | amount | xmin | xmax |

|---|---|---|---|

| widget | 100 | obsolete | obsolete |

| giblet | 200 | -2 | 1 |

| sprocket | 300 | obsolete | obsolete |

| gizmo | 400 | -2 | 9 |

| widget | 208 | 4 | 6 |

| sprocket | 133 | 5 | null |

| widget | 16 | 6 | 7 |

| widget | 222 | 7 | null |

| giblet | 233 | 8 | 0 |

| gizmo | 18 | 9 | null |

| giblet | 88 | 0 | 1 |

| giblet | 44 | 1 | null |

When performing the modulo calculations that compare XIDs, SynxDB, considers the XIDs of the rows and the current range of available XIDs to determine if XID wrapping has occurred between row XIDs.

For the example table XID wrapping has occurred. The XID 1 for giblet row is a later transaction than the XID 7 for widget row based on the modulo calculations for XID values even though the XID value 7 is larger than 1.

For the widget and sprocket rows, XID wrapping has not occurred and XID 7 is a later transaction than XID 5.

About Parallel Data Loading

This topic provides a short introduction to SynxDB data loading features.

In a large scale, multi-terabyte data warehouse, large amounts of data must be loaded within a relatively small maintenance window. SynxDB supports fast, parallel data loading with its external tables feature. Administrators can also load external tables in single row error isolation mode to filter bad rows into a separate error log while continuing to load properly formatted rows. Administrators can specify an error threshold for a load operation to control how many improperly formatted rows cause SynxDB to cancel the load operation.

By using external tables in conjunction with SynxDB’s parallel file server (gpfdist), administrators can achieve maximum parallelism and load bandwidth from their SynxDB system.

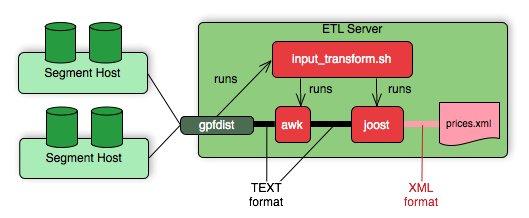

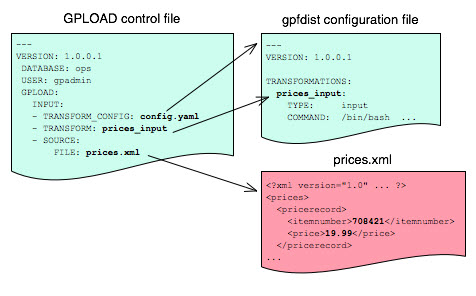

Another SynxDB utility, gpload, runs a load task that you specify in a YAML-formatted control file. You describe the source data locations, format, transformations required, participating hosts, database destinations, and other particulars in the control file and gpload runs the load. This allows you to describe a complex task and run it in a controlled, repeatable fashion.

About Redundancy and Failover in SynxDB

This topic provides a high-level overview of SynxDB high availability features.

You can deploy SynxDB without a single point of failure by mirroring components. The following sections describe the strategies for mirroring the main components of a SynxDB system. For a more detailed overview of SynxDB high availability features, see Overview of SynxDB High Availability.

Important When data loss is not acceptable for a SynxDB cluster, SynxDB master and segment mirroring is recommended. If mirroring is not enabled then SynxDB stores only one copy of the data, so the underlying storage media provides the only guarantee for data availability and correctness in the event of a hardware failure.

The SynxDB on vSphere virtualized environment ensures the enforcement of anti-affinity rules required for SynxDB mirroring solutions and fully supports mirrorless deployments. Other virtualized or containerized deployment environments are generally not supported for production use unless both SynxDB master and segment mirroring are enabled.

About Segment Mirroring

When you deploy your SynxDB system, you can configure mirror segment instances. Mirror segments allow database queries to fail over to a backup segment if the primary segment becomes unavailable. The mirror segment is kept current by a transaction log replication process, which synchronizes the data between the primary and mirror instances. Mirroring is strongly recommended for production systems and required for Synx Data Labs support.

As a best practice, the secondary (mirror) segment instance must always reside on a different host than its primary segment instance to protect against a single host failure. In virtualized environments, the secondary (mirror) segment must always reside on a different storage system than the primary. Mirror segments can be arranged over the remaining hosts in the cluster in configurations designed to maximize availability, or minimize the performance degradation when hosts or multiple primary segments fail.

Two standard mirroring configurations are available when you initialize or expand a SynxDB system. The default configuration, called group mirroring, places all the mirrors for a host’s primary segments on one other host in the cluster. The other standard configuration, spread mirroring, can be selected with a command-line option. Spread mirroring spreads each host’s mirrors over the remaining hosts and requires that there are more hosts in the cluster than primary segments per host.

Figure 1 shows how table data is distributed across segments when spread mirroring is configured.

Segment Failover and Recovery

When segment mirroring is enabled in a SynxDB system, the system will automatically fail over to the mirror segment instance if a primary segment instance becomes unavailable. A SynxDB system can remain operational if a segment instance or host goes down as long as all the data is available on the remaining active segment instances.

If the master cannot connect to a segment instance, it marks that segment instance as down in the SynxDB system catalog and brings up the mirror segment in its place. A failed segment instance will remain out of operation until an administrator takes steps to bring that segment back online. An administrator can recover a failed segment while the system is up and running. The recovery process copies over only the changes that were missed while the segment was out of operation.

If you do not have mirroring enabled, the system will automatically shut down if a segment instance becomes invalid. You must recover all failed segments before operations can continue.

About Master Mirroring

You can also optionally deploy a backup or mirror of the master instance on a separate host from the master host. The backup master instance (the standby master) serves as a warm standby in the event that the primary master host becomes non-operational. The standby master is kept current by a transaction log replication process, which synchronizes the data between the primary and standby master.

If the primary master fails, the log replication process stops, and the standby master can be activated in its place. The switchover does not happen automatically, but must be triggered externally. Upon activation of the standby master, the replicated logs are used to reconstruct the state of the master host at the time of the last successfully committed transaction. The activated standby master effectively becomes the SynxDB master, accepting client connections on the master port (which must be set to the same port number on the master host and the backup master host).

Since the master does not contain any user data, only the system catalog tables need to be synchronized between the primary and backup copies. When these tables are updated, changes are automatically copied over to the standby master to ensure synchronization with the primary master.

About Interconnect Redundancy

The interconnect refers to the inter-process communication between the segments and the network infrastructure on which this communication relies. You can achieve a highly available interconnect using by deploying dual Gigabit Ethernet switches on your network and redundant Gigabit connections to the SynxDB host (master and segment) servers. For performance reasons, 10-Gb Ethernet, or faster, is recommended.

About Database Statistics in SynxDB

An overview of statistics gathered by the ANALYZE command in SynxDB.